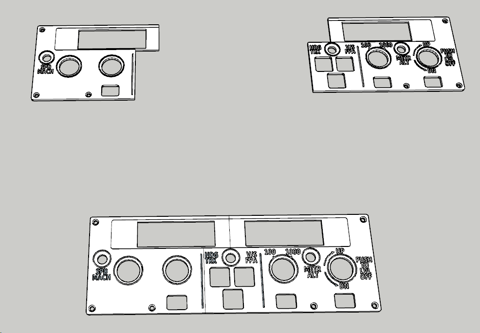

Back Plates, Face Plates and Korry Switches for an Airbus A320

With 3D printing comes a lot of trial and error, and those ends of spools you have a few metres left on certainly come in handy for playing about with sizes. I went into printing small components with eyes wide open - you're battling heat, filament width and height, speed and a number of other factors that make printing with plastics quite difficult.

Designing and Printing the Back Plate

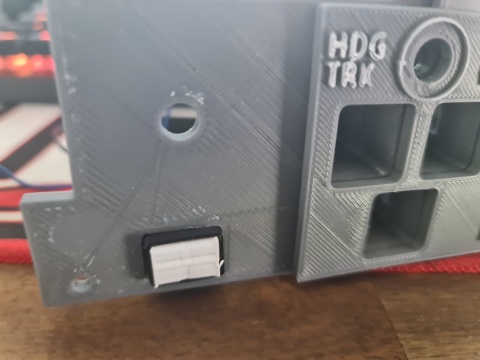

Following on from the front plate last week, I started designing the back plate. It will also need to be split, but this time into thirds. This will help with some stability given there are only 6 holes to loosely match the design of the real thing. Similarly, I'd need to ensure the LCD screens would fit in place and the Rotary Encoders would have something to grab onto. The holes themselves for holding the Korry switches in would need to be a bit smaller as I had originally planned to have the buttons themselves standalone.

With some printing and brass inserts to hold the faceplate in place, it looks like I'm on the right track.

After the initial "awww..." moment, you can start to quickly see some faults. The first is that in this particular design, I'm using 2x 1602 LCD Screens. They have a large(ish) PCB around them. While I had factored in the top part, I hadn't factored in the bottom part as I was drawing in the Rotary Encoder holds. This is probably where modelling the entire thing upfront would have saved some time...

There's also what appears to be a burn mark in between where the LCD screens will fit so I thought I'd better re-level the 3D printer and print again anyway. I don't have any pictures of it, but it largely looked the same and the LCD screens fit flush now. I'd then embark on a journey to build a smaller Korry switch - similar to that tested in the previous post. After several prints, it was clear that the smaller Korry would be too small to fit either a 5050 SMD LED or a 3mm LED bulb so I had to ditch the idea and build the external enclosure as part of the back plate. This process would waste a few days, but we eventually got there. This had to be printed with the button side up, so there was a lot of support material / waste to clear out too.

Now that we have an external enclosure for the Korry switches, it's time to test a few possible options.

Finalising the Korry Switch

Using the same dimensions as present in the new backplate, it was time to re-do the design. The main challenge and re-do here was getting the right size appropriate for the hole. When working within 1mm tolerances, you tend to fight both the printer and design. After 3-4 goes, we've got a good fit and button action (albeit the buttons themselves are kind of garbage).

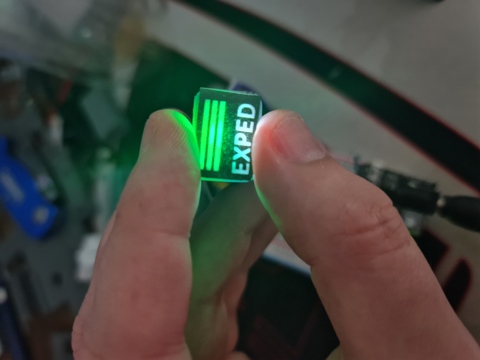

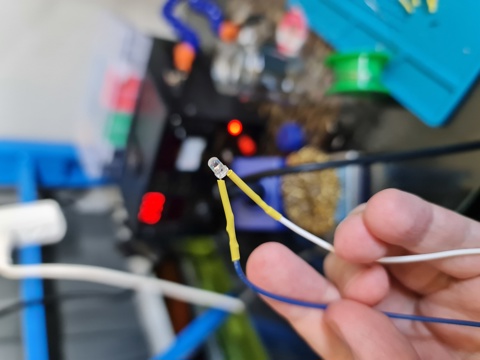

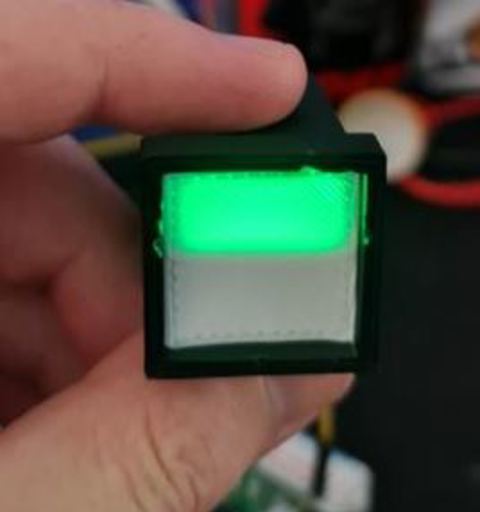

This... took quite a few goes (the diffuser, that is). Inside the chamber is a button and an insert. Green and White 3mm LEDs sit inside a small white diffuser thing, similar to the original Korry design. All that's left now is to solder up a bunch of LEDs, wires and buttons to fit inside of the FCU.

After getting the LEDs all installed and buttons all set up, it's time to put it in the main face plate and the result is pretty good. Still plenty of work to do and some transparent sheets to play with.

Designing Korry Switches, Rotary Encoders and a Face Plate for an Airbus A320 Controller

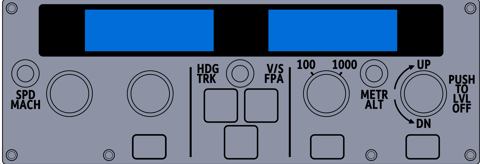

The journey to build a Custom Flight Controller is only just beginning. As mentioned in my previous post, the main component I'm focussing on is getting the Flight Control Unit replicated. This component controls the crucial navigation elements of your flight - namely Speed, Heading, Altitude and Vertical Speed. There are some extra functions available that turn on and off Auto Pilot and Auto Thrust, as well as some expedite buttons and localiser buttons. There are several components to replicate:

- Korry Switches for the buttons in two sizes - 3 large, 3 small

- Rotary Encoders with Push and Pull buttons (most rotary encoders either don't have a button, or have a push only setup)

- 3 Push Buttons for the top layer

- Display with several status lights, ideally with 7 segment displays

For this first prototype in 3D printing all the parts, I have a couple of 1602 LCD screens that will be incorporated into the design instead of the 7 segment displays with lights. This will drastically simplify the wiring - as there are fewer LEDs and components to make. As I progress, I'm seeing a CNC (possibly with laser) in my future to make things fit better (and the ultimate goal to be backlit).

Korry Switch Design

The Korry switch design on the Airbus A320 requires two status lights for a single push button. Several options exist if you do a casual YouTube or Yeggi search - I've decided though to give the design a bit of a go.

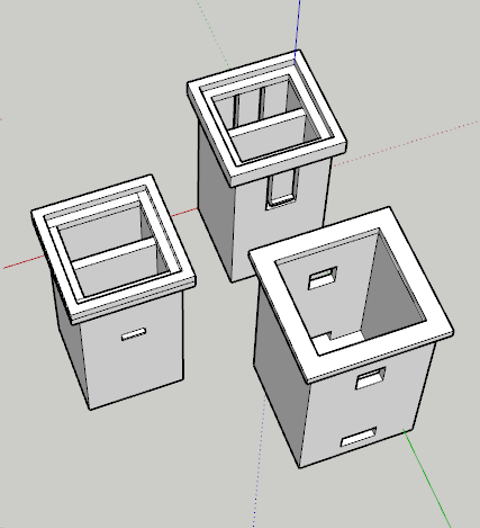

With a bit of tweaking and some test prints, the design that has worked the best includes the outer shell (bottom right) and the inner shell (left). Inside the inner shell, I'll place a single LED in each chamber. These will come from some WS2812b strip left over from the Christmas Lights display. These are thin and stupidly bright, so they'll penetrate white plastic fairly easily for this project. I'll also print something of a paper face (maybe a transparent inkjet sheet in future) and do some testing. At the bottom of the outer shell will be a Push button. The 'spring' mechanism in the button will push back on the plastic, returning it to the off position, and those clips will prevent the button from pushing straight out again. After 3 test prints, and some adjustments to the position of the holes and clips, I'm pleased with the result.
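As a rough illustration of the two-chamber idea, here is a minimal Python sketch that maps the state of one switch to the colours of its two WS2812b pixels. It assumes one pixel per chamber and a simple latching behaviour; the names `KorryState`, `korry_pixels` and `press` are hypothetical, and which chamber gets green or white is an assumption rather than anything settled in this post. It only computes colour values and leaves the actual LED driver out.

```python
from dataclasses import dataclass

OFF = (0, 0, 0)
GREEN = (0, 255, 0)      # assumed colour for the "engaged" chamber
WHITE = (255, 255, 255)  # assumed colour for the label chamber


@dataclass(frozen=True)
class KorryState:
    engaged: bool = False   # toggled by the push button
    backlight: bool = True  # label chamber lit while the panel is powered


def korry_pixels(state, brightness=51):
    """Return (upper, lower) RGB tuples for the two chambers.

    brightness is 0-255; WS2812b pixels are very bright, so the
    default here is deliberately low (an assumption, tune to taste).
    """
    def scale(rgb):
        # Integer maths keeps the result an exact 0-255 value.
        return tuple(c * brightness // 255 for c in rgb)

    upper = scale(GREEN) if state.engaged else OFF
    lower = scale(WHITE) if state.backlight else OFF
    return upper, lower


def press(state):
    """Return the state after one button press (latching toggle)."""
    return KorryState(engaged=not state.engaged, backlight=state.backlight)
```

The two tuples returned by `korry_pixels` could then be written to whichever pixel indices the switch occupies on the strip.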

With a bit of tidy up, a transparent sheet and better paper - these switches will fit nicely in the design.

Rotary Encoder with Push and Pull Action

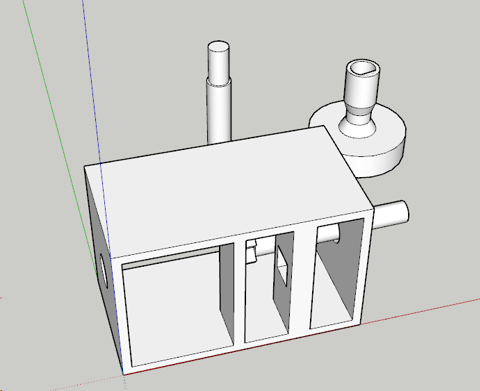

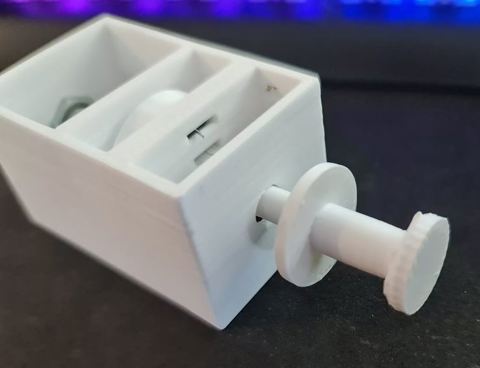

This one was a little more complicated, and a design I'll probably keep working on - possibly with Cherry MX switches (or clones thereof). These rotary encoder clones are plentiful on YouTube with plenty of ideas. I can't say I'm bringing much new to the table here, only that I'll be designing these to fit in the cockpit I intend to build. This one took a few more goes at the design but the concept is again fairly simple.

The Rotary Encoder will have a push button. If it didn't, I could still achieve the same with a second Push Button but let's not waste what's already available. That leaves the pull action that will need to be activated somehow. With a loose fit on the encoder - tight enough to turn, loose enough to pull, I should be able to build a mechanism that will - when pulled - activate a push button inside the container. Finally - a stalk that will allow the rotation to occur will be required so the user can set their intended Speed, Heading, Altitude or Vertical Speed.
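On the software side, the knob boils down to three inputs: the encoder's two quadrature lines, its built-in push button, and the extra button hit by the pull mechanism. The following is a minimal Python sketch of how those raw pin levels might be turned into events. The class name `KnobDecoder`, the helper `feed`, the event names and the 4-transitions-per-detent figure are all assumptions for illustration; the direction that counts as "cw" depends on how the encoder is wired, and real hardware would also need debouncing.

```python
# Quadrature transition table, indexed by (previous << 2) | current,
# where each 2-bit state is (A << 1) | B.
# +1 and -1 are valid single steps; 0 means no change or an invalid jump.
_TRANSITIONS = (
     0, +1, -1,  0,
    -1,  0,  0, +1,
    +1,  0,  0, -1,
     0, -1, +1,  0,
)


class KnobDecoder:
    STEPS_PER_DETENT = 4  # assumption: common for cheap detented encoders

    def __init__(self):
        self._ab = 0        # assumes both encoder lines start low
        self._accum = 0     # quarter-steps since the last detent
        self._push = False
        self._pull = False

    def update(self, a, b, push=False, pull=False):
        """Feed one sample of pin levels; return a list of event names."""
        events = []
        ab = (int(a) << 1) | int(b)
        self._accum += _TRANSITIONS[(self._ab << 2) | ab]
        self._ab = ab
        if self._accum >= self.STEPS_PER_DETENT:
            events.append("cw")
            self._accum = 0
        elif self._accum <= -self.STEPS_PER_DETENT:
            events.append("ccw")
            self._accum = 0
        # Report buttons on the rising edge only, so a held knob
        # does not repeat the event on every sample.
        if pull and not self._pull:
            events.append("pull")
        if push and not self._push:
            events.append("push")
        self._push, self._pull = bool(push), bool(pull)
        return events


def feed(decoder, samples):
    """Run a sequence of (a, b[, push, pull]) samples through a decoder."""
    events = []
    for sample in samples:
        events.extend(decoder.update(*sample))
    return events
```

Feeding one full quadrature cycle, for example `feed(KnobDecoder(), [(0, 1), (1, 1), (1, 0), (0, 0)])`, produces a single rotation event.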

This took a few goes to get the position and wheel size right. A digital calliper makes the job easier. Since it only uses about 1m-2m of plastic to print any of the components, I figure it's easier to print, then measure with the calliper to work out what I need to change.

Success! The action works to a point, but the "pull" button is probably not as nice as it could be. So there might still be further modification here before it goes live. Many others use either momentary toggle switches or the larger 12mm square buttons. I have a drawer full of different sizes, so we might try something in that space before settling, but definitely some promise here.

For the 100/1000 selector, I've got a few choices in microswitches or toggle switches to try, but these'll come in a future post.
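Whatever switch ends up being used, the selector's job in software is small: it picks how many feet each encoder click adds to the selected altitude. A minimal Python sketch follows, with the function name `adjust_altitude` being hypothetical and the 100 ft to 49,000 ft limits an assumption about the range to clamp to.

```python
def adjust_altitude(current_ft, clicks, step_ft=100, lo_ft=100, hi_ft=49000):
    """Apply encoder clicks to the selected altitude.

    step_ft is set by the 100/1000 selector; clicks is positive for
    one direction of rotation and negative for the other.
    """
    if step_ft not in (100, 1000):
        raise ValueError("selector only offers 100 or 1000 ft steps")
    # Clamp so the value never leaves the assumed display range.
    return max(lo_ft, min(hi_ft, current_ft + clicks * step_ft))
```

For example, three clicks with the selector at 1000 would take 5,000 ft to 8,000 ft, while the same three clicks at 100 would give 5,300 ft.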

A320 FCU Panel

In designing this one, I note there's a severe lack of vector-image importers for Sketchup Make 2017. My go-to vector tool for years has been Adobe Fireworks which last received an update around CS6. I have a fairly old version of the product but I'm proficient enough in it that vector images are straightforward for me. After some research, I estimate the size of the panel to be 260mm x 90mm. This means that my 200mm x 200mm bed isn't going to fit it all in one. I could do it horizontally if I had the right supports, but I've not yet been successful on my 3D Printer in doing that.
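The bed-size problem can be checked with a few lines of arithmetic. This Python sketch, using hypothetical helper names, works out how many pieces a 260mm-wide panel needs on a 200mm bed and also checks whether rotating the panel flat on the bed would let it fit in one go. It assumes an equal split and ignores any margin the printer needs around the edges.

```python
import math


def pieces_needed(panel_mm, bed_mm):
    """Minimum number of pieces along one axis for the panel to fit."""
    return math.ceil(panel_mm / bed_mm)


def fits_rotated(width_mm, height_mm, bed_mm, steps=900):
    """Check whether a rectangle fits flat on a square bed at any angle.

    Sweeps 0-90 degrees and tests the rotated bounding box each time.
    """
    for i in range(steps + 1):
        t = math.radians(90 * i / steps)
        box_w = width_mm * math.cos(t) + height_mm * math.sin(t)
        box_h = width_mm * math.sin(t) + height_mm * math.cos(t)
        if box_w <= bed_mm and box_h <= bed_mm:
            return True
    return False


PANEL_W, PANEL_H, BED = 260, 90, 200
print(pieces_needed(PANEL_W, BED))          # pieces across the width
print(fits_rotated(PANEL_W, PANEL_H, BED))  # can it lie diagonally?
```

With these numbers the panel needs two pieces, and laying it diagonally does not help: at 45 degrees the rotated bounding box is roughly 247mm on each side.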

In Fireworks, I prepared a 2600px x 900px vector image and exported it to a format that, after a trip through some online conversion tools and some pretty legacy formats, gave me a vector file that I could import into Sketchup. It's not a pretty workflow by any means, and something that probably requires a dedicated post when I can iron out the nuts and bolts of it in something digestible. This Vector file is layered so those colours etc... are visual layers rather than anything particularly useful.

I mentioned earlier that I'd use 2x 1602 character displays and those have been drawn in here instead of the 7 Seg display I'll do at some point in the future. I had to increase the text size to the 5mm in height as identified in the previous post so that it would be legible on the printout. After importing the vector file into Sketchup, the next step was to determine where the 'cut' line will be. I decided to do that on the left part, and straight up the middle of the LCD.
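Drawing the 260mm x 90mm panel at 2600px x 900px gives a convenient scale of 10 pixels per millimetre, which makes it easy to size things like the 5mm text in the vector tool. A small Python sketch of that conversion, with hypothetical helper names:

```python
PANEL_MM = (260, 90)
CANVAS_PX = (2600, 900)

# Scale is the same on both axes, so the drawing is not distorted.
PX_PER_MM = CANVAS_PX[0] / PANEL_MM[0]
assert PX_PER_MM == CANVAS_PX[1] / PANEL_MM[1]


def mm_to_px(mm):
    """Convert a physical size on the panel to canvas pixels."""
    return mm * PX_PER_MM


def px_to_mm(px):
    """Convert a canvas measurement back to millimetres."""
    return px / PX_PER_MM


print(mm_to_px(5))  # height in pixels for 5mm text
```

At this scale the 5mm text works out to 50px on the canvas. Whether that scale survives the import into Sketchup depends on the conversion tools used, so it is worth measuring one known dimension after importing.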

So... now the hard part - determining the best offsets. I settled on 3.5mm for the standard height and an extra 1mm for the text and outlines (which will be painted white after print). So that's what I did. I loaded the printer with some Grey ABS plastic and out came two pieces, albeit slightly warped.
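One thing worth checking with offsets like these is how they divide into printed layers. The layer height isn't stated here, so the 0.2mm used in this Python sketch is purely an assumption, and `layers` is a hypothetical helper. Working in whole microns avoids floating-point rounding surprises.

```python
def layers(height_um, layer_um=200):
    """Return (whole_layers, leftover_um) for a feature height.

    Heights are in microns; layer_um=200 assumes a 0.2mm layer height.
    """
    return divmod(height_um, layer_um)


BASE_UM = 3500  # 3.5mm standard plate height
TEXT_UM = 1000  # extra 1mm for raised text and outlines

print(layers(BASE_UM))            # base plate
print(layers(TEXT_UM))            # raised text on its own
print(layers(BASE_UM + TEXT_UM))  # full height at the lettering
```

At an assumed 0.2mm layer height the 3.5mm base comes to 17 layers with 0.1mm left over, so the slicer would have to round it up or down, while the 1mm of raised text is an exact 5 layers. A 0.1mm layer height would divide both evenly.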

At this stage, I'm pretty happy with how it's coming along, and borrowing a tip from Heli Mech's fantastic video on building similar for a Boeing 737, I bought myself a white paint pen and painted the raised text. And I must say, the results are brilliant - for a 3D Printed job.